AI Visibility Reporting: Proven Ways to Track Your Brand

Most brands have no idea whether they’re showing up in AI-generated answers. They’re optimising for Google rankings, checking backlink profiles, and monitoring click-through rates, while completely missing what’s happening in ChatGPT, Perplexity, Google AI Overviews, and every other AI search surface where their next customer might be looking.

AI visibility reporting fills that gap. It gives you a structured way to measure whether AI systems mention your brand, how often, in what context, and whether those mentions actually drive results. This guide covers the core metrics you need, the tools that track them, and how to build a reporting framework that makes sense for your clients or your own business.

What Is AI Visibility Reporting?

AI visibility reporting is the practice of measuring how frequently and favourably a brand appears in responses generated by large language models (LLMs) and AI-powered search engines.

Unlike traditional SEO reporting, which tracks keyword rankings, organic traffic, and backlinks, AI visibility reporting focuses on a different question: when someone asks an AI assistant about your industry, do you get mentioned?

This matters because AI search behaviour is fundamentally different from keyword-based search. Users ask conversational questions. The AI synthesises an answer from its training data and, in some cases, real-time retrieval. If your brand isn’t part of that synthesis, you’re invisible to a growing segment of your audience.

The GEO Connection

Generative Engine Optimisation (GEO) is the practice of optimising content so it gets cited by AI systems. AI visibility reporting is how you measure whether your GEO efforts are working. Without reporting, you’re optimising blind.

The Core AI Visibility Metrics You Need to Track

AI Presence Rate

AI Presence Rate measures how often your brand appears in AI-generated responses for a defined set of queries.

Practically, you run a set of target prompts through AI platforms, things like “what’s the best [product or service] for [use case]?”, and record how many responses include your brand name or a direct reference to your content.

How to calculate it: (Number of AI responses mentioning your brand ÷ Total prompts tested) × 100
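The calculation above can be sketched in a few lines of Python. This is a minimal illustration, not a production matcher: the brand names and sample responses are invented, and the function name `presence_rate` is hypothetical. It uses simple case-insensitive substring matching, so it assumes your brand name doesn't appear inside unrelated words.

```python
def presence_rate(responses: list[str], brand_terms: list[str]) -> float:
    """Return the percentage of responses that mention any brand term."""
    if not responses:
        raise ValueError("need at least one response")
    terms = [t.lower() for t in brand_terms]
    # A response counts once, however many times the brand appears in it.
    hits = sum(any(t in r.lower() for t in terms) for r in responses)
    return hits / len(responses) * 100


# Invented sample responses for a hypothetical brand called "Acme".
responses = [
    "For small teams, Acme CRM and two others are worth a look.",
    "Popular choices include Widgetly and Boltbase.",
    "Acme is often recommended for this use case.",
    "It depends on your budget and team size.",
]

rate = presence_rate(responses, ["Acme"])
print(f"AI Presence Rate: {rate:.1f}%")  # 2 of 4 responses -> 50.0%
```

In practice you would add alternative spellings, product names, and your domain to `brand_terms` so that indirect references are counted too.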

A 30–40% AI Presence Rate for branded queries is a reasonable starting benchmark. For competitive non-branded queries, even 10–15% is meaningful.

Track this weekly or biweekly. AI model updates can shift these numbers significantly, and you want to catch changes quickly.

Citation Authority

Not all AI mentions are equal. Citation Authority measures the quality of how your brand is referenced, not just whether it appears.

High-authority citations look like this: the AI names your brand as a primary recommendation, links to a specific piece of your content, or positions you as the definitive source on a topic. Low-authority citations are vague references, secondary mentions, or appearances in a list of five other brands with no differentiation.

Scoring Citation Authority requires qualitative analysis. Build a simple 1–3 scale:

| Score | Description |
|---|---|
| 3 | Primary recommendation, direct citation, or authoritative reference |
| 2 | Mentioned as one of several options, with some context |
| 1 | Passing reference, no differentiation, or negative framing |

Average your scores across all mentions to get a Citation Authority index for any reporting period.
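As a rough sketch of that averaging step, assuming you have already scored each mention by hand on the 1–3 scale (the scores below are made up and the function name is hypothetical):

```python
from statistics import mean


def citation_authority_index(scores: list[int]) -> float:
    """Average the 1-3 quality scores for one reporting period."""
    if not scores:
        raise ValueError("no mentions were scored this period")
    if any(s not in (1, 2, 3) for s in scores):
        raise ValueError("scores must be 1, 2, or 3")
    return float(mean(scores))


# Invented scores for six mentions in one reporting period.
scores = [3, 2, 2, 1, 3, 2]
index = citation_authority_index(scores)
print(f"Citation Authority index: {index:.2f}")  # 13 / 6 -> 2.17
```

Responses that don't mention the brand at all are left out of the list rather than scored as zero, so the index reflects mention quality only and stays independent of AI Presence Rate.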

Share of AI Conversation

Share of AI Conversation (SoAC) is the AI equivalent of share of voice. It tells you what percentage of AI-generated mentions in your category belong to your brand versus competitors.

How to calculate it: (Your brand’s AI mentions ÷ Total AI mentions for all tracked brands) × 100

This metric contextualises your AI Presence Rate. A 35% presence rate sounds strong until you discover your main competitor has 70% and you’re fighting for scraps. SoAC gives you that competitive context.

Run your target prompts, record every brand mentioned across all responses, and tally the distribution. Do this monthly at minimum.
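Here is one way the tally might look in Python. It assumes you have already extracted the list of brands mentioned in each response, and it counts a brand at most once per response; that counting rule is a choice made for this sketch, not something the formula dictates. Brand names are invented.

```python
from collections import Counter


def share_of_ai_conversation(mentions_per_response: list[list[str]]) -> dict[str, float]:
    """Return each brand's percentage share of all tracked brand mentions."""
    counts = Counter(
        brand
        for brands in mentions_per_response
        for brand in set(brands)  # count a brand once per response
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total * 100 for brand, n in counts.most_common()}


# One inner list per AI response; an empty list means no tracked brand appeared.
mentions = [
    ["Acme", "Widgetly"],
    ["Widgetly"],
    ["Acme", "Boltbase", "Widgetly"],
    [],
    ["Widgetly", "Boltbase"],
]

shares = share_of_ai_conversation(mentions)
for brand, pct in shares.items():
    print(f"{brand}: {pct:.0f}%")
```

With this sample data there are eight mentions in total: Widgetly holds 50% and Acme and Boltbase hold 25% each.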

Tools for Tracking AI Brand Visibility

The tooling category for AI search tracking is still developing fast. Here are the platforms worth knowing about right now.

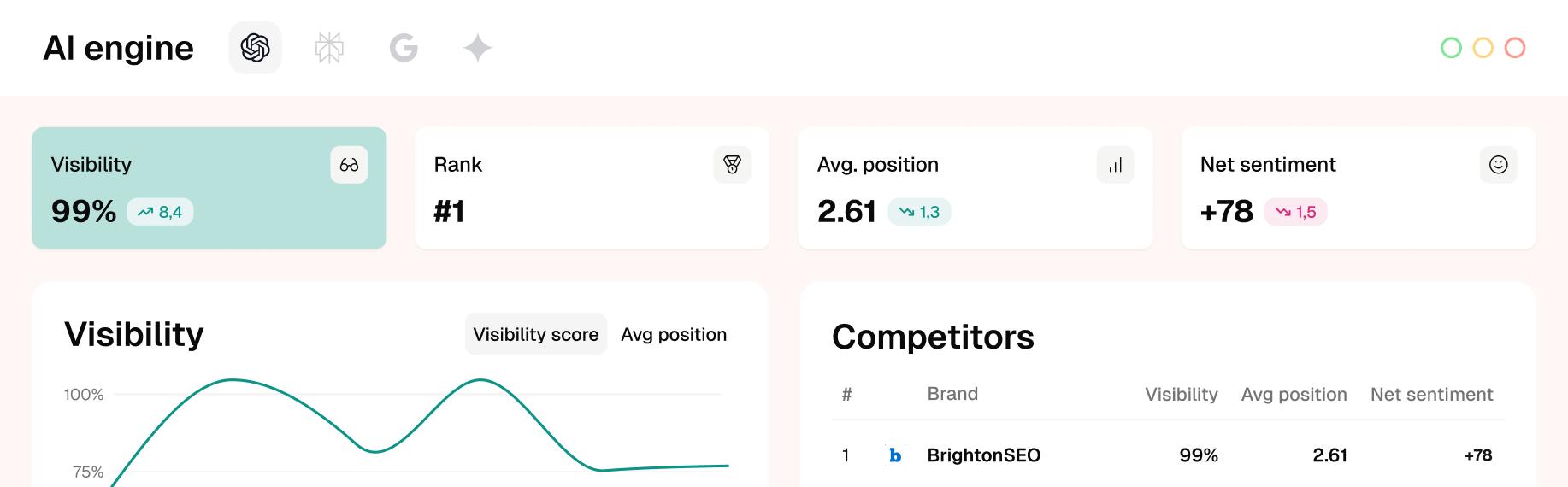

Goodie AI

Goodie AI is purpose-built for AI brand monitoring. It tracks how your brand appears across major LLMs including ChatGPT, Claude, Gemini, and Perplexity. You set up brand tracking, define competitor sets, and the platform runs systematic prompt testing to measure AI Presence Rate and competitive Share of AI Conversation.

It’s one of the more complete solutions available specifically for this use case, with reporting dashboards that make client presentations straightforward.

HallSearch (Hall)

Hall focuses on LLM visibility tracking with particular depth around citation source analysis. It helps you understand not just whether you’re being cited, but what content the AI is pulling from when it mentions you. That makes it useful for diagnosing GEO gaps: if the AI keeps citing an old blog post instead of your updated service page, you can see that and fix it.

XFunnel

XFunnel approaches AI visibility from a revenue attribution angle. It tracks brand mentions across AI search platforms and connects them to downstream conversion signals. If you’re trying to build an ROI case for AI visibility work, which most agency clients will eventually ask for, XFunnel’s attribution model gives you more to work with than pure mention counts.

For smaller budgets, you can run a basic AI visibility audit manually. Build a prompt set of 20–30 queries, run them through ChatGPT, Perplexity, and Google AI Overviews, and record results in a spreadsheet. It’s time-consuming but gives you a real baseline before investing in dedicated tools.
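If you'd rather keep the manual audit in a CSV file than a hand-built spreadsheet, a minimal sketch could look like the following. The column names, dates, prompts, and brands are all assumptions chosen for illustration; adapt them to whatever you actually record.

```python
import csv
import io

# Hypothetical column layout for a manual audit log.
FIELDS = ["date", "platform", "prompt", "brand_mentioned", "authority_score", "other_brands"]


def write_audit_log(rows: list[dict], out) -> None:
    """Write one row per prompt-and-platform test to a CSV stream."""
    writer = csv.DictWriter(out, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


# Invented example rows; authority_score is blank when the brand is absent.
rows = [
    {"date": "2025-01-06", "platform": "ChatGPT",
     "prompt": "Who are the best providers of payroll software?",
     "brand_mentioned": "yes", "authority_score": 2,
     "other_brands": "Widgetly; Boltbase"},
    {"date": "2025-01-06", "platform": "Perplexity",
     "prompt": "Who are the best providers of payroll software?",
     "brand_mentioned": "no", "authority_score": "",
     "other_brands": "Widgetly"},
]

buffer = io.StringIO()  # swap for open("audit.csv", "w", newline="") to save a file
write_audit_log(rows, buffer)
print(buffer.getvalue())
```

Keeping one row per prompt per platform means the same file can later feed presence, quality, and competitor calculations without re-running anything.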

Building an AI Visibility Reporting Framework

1: Define Your Prompt Set

Your prompt set is the foundation of everything. These are the questions you’ll run through AI platforms to test visibility.

Include three categories:

- Branded prompts (“What does [your brand] specialise in?”)

- Category prompts (“Who are the best providers of [your service]?”)

- Problem-based prompts (“How do I [solve the problem your service addresses]?”)

Aim for 30–50 prompts. Review and update the set quarterly: search behaviour and AI capabilities both shift, and stale prompts give you stale data.
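One lightweight way to keep a prompt set consistent across clients is to generate it from templates. The sketch below uses the three example prompts from the categories above; the `TEMPLATES` structure, the function name, and the sample values are assumptions for illustration, and a real set would carry many more templates per category to reach 30–50 prompts.

```python
# One template per category here; extend each list for a full prompt set.
TEMPLATES = {
    "branded": ["What does {brand} specialise in?"],
    "category": ["Who are the best providers of {service}?"],
    "problem": ["How do I {problem}?"],
}


def build_prompt_set(brand: str, service: str, problem: str) -> list[dict[str, str]]:
    """Expand every template into a labelled prompt."""
    values = {"brand": brand, "service": service, "problem": problem}
    return [
        {"category": category, "prompt": template.format(**values)}
        for category, templates in TEMPLATES.items()
        for template in templates
    ]


# Hypothetical brand and service, purely for illustration.
prompt_set = build_prompt_set(
    brand="Acme",
    service="payroll software",
    problem="run payroll for a remote team",
)
for item in prompt_set:
    print(f'{item["category"]}: {item["prompt"]}')
```

Labelling each prompt with its category makes it easy to report branded and non-branded presence rates separately later on.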

2: Establish Your Baseline

Before you can show improvement, you need a starting point. Run your full prompt set across your target AI platforms and record:

- AI Presence Rate (branded and non-branded)

- Citation Authority scores for each mention

- Share of AI Conversation versus top 3–5 competitors

This baseline becomes the comparison point for every future report.
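To make that comparison concrete, here is a small sketch that stores a baseline and reports the change for each metric in a later period. The metric keys and every number are invented for illustration.

```python
def metric_deltas(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Return current minus baseline for every metric present in both."""
    return {
        name: round(current[name] - baseline[name], 2)
        for name in baseline
        if name in current
    }


# Invented figures: percentages for rates and share, 1-3 scale for authority.
baseline = {
    "presence_branded": 32.0,
    "presence_category": 8.0,
    "citation_authority": 1.9,
    "share_of_conversation": 14.0,
}
current = {
    "presence_branded": 38.0,
    "presence_category": 11.0,
    "citation_authority": 2.1,
    "share_of_conversation": 17.5,
}

for name, change in metric_deltas(baseline, current).items():
    print(f"{name}: {change:+.2f}")
```

The deltas are in the same units as the metrics themselves, so a change of 6.0 in a presence rate means six percentage points, not six percent.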

3: Set Reporting Cadence and Benchmarks

Weekly tracking makes sense for AI Presence Rate because it’s fast to measure and sensitive to model updates. Citation Authority and Share of AI Conversation work well on a monthly cadence.

Benchmarks to aim for (adjust based on category competitiveness):

| Metric | Emerging | Competitive | Strong |
|---|---|---|---|
| AI Presence Rate (branded) | <20% | 20–50% | 50%+ |
| AI Presence Rate (category) | <10% | 10–30% | 30%+ |
| Citation Authority Index | <1.5 | 1.5–2.2 | 2.2+ |
| Share of AI Conversation | <15% | 15–35% | 35%+ |
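If you want reports to label each metric automatically, the benchmark table can be encoded as thresholds. This is a sketch under one assumption the table leaves open: a value that lands exactly on the upper boundary (for example, 50% branded presence) is treated as Strong. The dictionary keys are hypothetical names.

```python
# (lower bound of Competitive, lower bound of Strong) for each metric.
BENCHMARKS = {
    "presence_branded": (20.0, 50.0),
    "presence_category": (10.0, 30.0),
    "citation_authority": (1.5, 2.2),
    "share_of_conversation": (15.0, 35.0),
}


def classify(metric: str, value: float) -> str:
    """Map a metric value to its benchmark band."""
    competitive_from, strong_from = BENCHMARKS[metric]
    if value < competitive_from:
        return "Emerging"
    if value < strong_from:
        return "Competitive"
    return "Strong"


print(classify("presence_branded", 35.0))       # Competitive
print(classify("citation_authority", 2.2))      # Strong
print(classify("share_of_conversation", 10.0))  # Emerging
```

Because the thresholds live in one dictionary, adjusting them for a more or less competitive category is a one-line change per metric.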

4: Connect to ROI

This is where most AI visibility reporting falls short. Mention counts don’t pay invoices. You need to connect AI visibility to business outcomes.

Practical approaches:

- Traffic correlation: Track whether increases in AI Presence Rate correlate with increases in direct or branded organic traffic (indicating users searching after seeing an AI mention)

- Survey attribution: Add “how did you hear about us?” to your lead forms; “AI search” or “ChatGPT” is increasingly a real answer

- Branded search volume: Rising branded search volume often indicates AI-driven discovery; track this in Google Search Console alongside your AI metrics

XFunnel handles some of this automatically. For manual reporting, build a simple correlation table in your monthly report comparing AI visibility trends to traffic and lead trends.
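For the manual route, a Pearson correlation coefficient is one simple way to summarise how closely two trends move together. The sketch below computes it by hand so it has no dependencies; the monthly figures are invented purely for illustration, and a high coefficient over a handful of months suggests a relationship worth watching rather than proving that AI mentions caused the traffic.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (spread_x * spread_y)


# Invented monthly figures: AI Presence Rate (%) and branded search clicks.
presence_by_month = [12, 15, 19, 22, 27, 30]
branded_clicks_by_month = [400, 430, 470, 520, 560, 610]

r = pearson(presence_by_month, branded_clicks_by_month)
print(f"Correlation between presence rate and branded clicks: {r:.2f}")
```

Values near 1 mean the two series rise and fall together, values near 0 mean no linear relationship, and values near -1 mean they move in opposite directions.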

Mistakes in AI Visibility Reporting

Using too few prompts. Ten prompts isn’t a meaningful sample. You need enough variety to catch how different question framings affect your visibility.

Ignoring competitor benchmarking. Your AI Presence Rate means very little without context. Always report SoAC alongside it.

Testing only one AI platform. ChatGPT, Perplexity, and Google AI Overviews behave differently. A brand strong in one may be invisible in another. Test all three at minimum.

Reporting mentions without quality scoring. A passing mention in a list of ten competitors is very different from a primary recommendation. Always layer in Citation Authority.

FAQs

What is AI visibility reporting?

AI visibility reporting is a structured process for measuring how often and how favourably a brand appears in AI-generated search results across platforms like ChatGPT, Perplexity, and Google AI Overviews. It tracks metrics like AI Presence Rate, Citation Authority, and Share of AI Conversation to give brands a clear picture of their LLM visibility.

How is AI visibility different from traditional SEO rankings?

Traditional SEO rankings measure position in a list of links. AI visibility measures whether you’re mentioned in a synthesised answer, no link required. Users often don’t click through at all; the AI’s response is the answer. That’s why tracking AI mentions separately from keyword rankings matters.

Which tools are best for tracking AI brand mentions?

Goodie AI, Hall, and XFunnel are currently among the most focused tools for AI brand monitoring. For budget-conscious teams, manual prompt testing across ChatGPT, Perplexity, and Google AI Overviews is a viable starting point.

How often should I run AI visibility reports?

Track AI Presence Rate weekly, because it shifts frequently as AI models update. Run Share of AI Conversation and Citation Authority analysis monthly, and full competitive audits quarterly.

What’s a good benchmark for AI Presence Rate?

For branded queries, a 30–50% AI Presence Rate is a reasonable competitive baseline. For non-branded category queries, 10–30% is competitive and anything above 30% is strong. These figures vary significantly by industry and competitive density.

Can I measure ROI from AI visibility?

Yes, though it requires combining AI metrics with downstream data. Track correlations between AI Presence Rate improvements and branded search volume, direct traffic, and lead attribution. Tools like XFunnel offer more direct attribution modelling.

How does AI visibility reporting relate to GEO?

Generative Engine Optimisation (GEO) is the strategy; AI visibility reporting is the measurement. You use reporting to determine whether your GEO work (content optimisations, structured data, authoritative sourcing, clear entity signals) is actually increasing your presence in AI-generated answers.

What prompts should I use for AI visibility testing?

Use a mix of branded queries (“what does [brand] offer?”), category queries (“best [service] providers”), and problem-based queries (“how do I [solve your core problem]?”). Aim for 30–50 prompts updated quarterly.

Key Takeaways

- AI visibility reporting tracks brand presence across LLM-powered platforms, separate from and complementary to traditional SEO reporting

- The three core metrics are AI Presence Rate, Citation Authority, and Share of AI Conversation

- Tools like Goodie AI, Hall, and XFunnel automate tracking; manual prompt testing works for smaller budgets

- A solid reporting framework starts with a defined prompt set, establishes a baseline, sets a consistent cadence, and connects mentions to ROI signals

- Always benchmark against competitors; your raw AI Presence Rate means very little without Share of AI Conversation context

If you’re building out AI search tracking for your clients and need a white label reporting structure that holds up, 7thclub.com’s white label SEO services include GEO reporting frameworks you can deploy under your own brand. Get in touch to see how it works in practice.